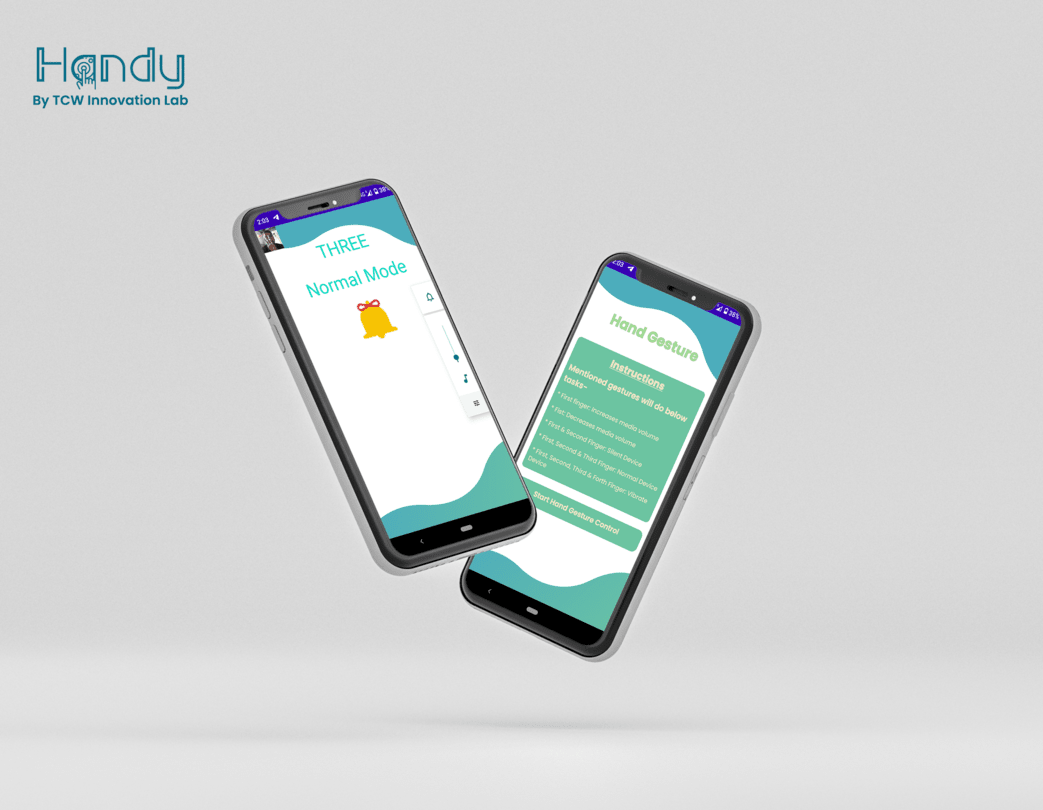

Handy

An app to detect hand gestures and perform operations.

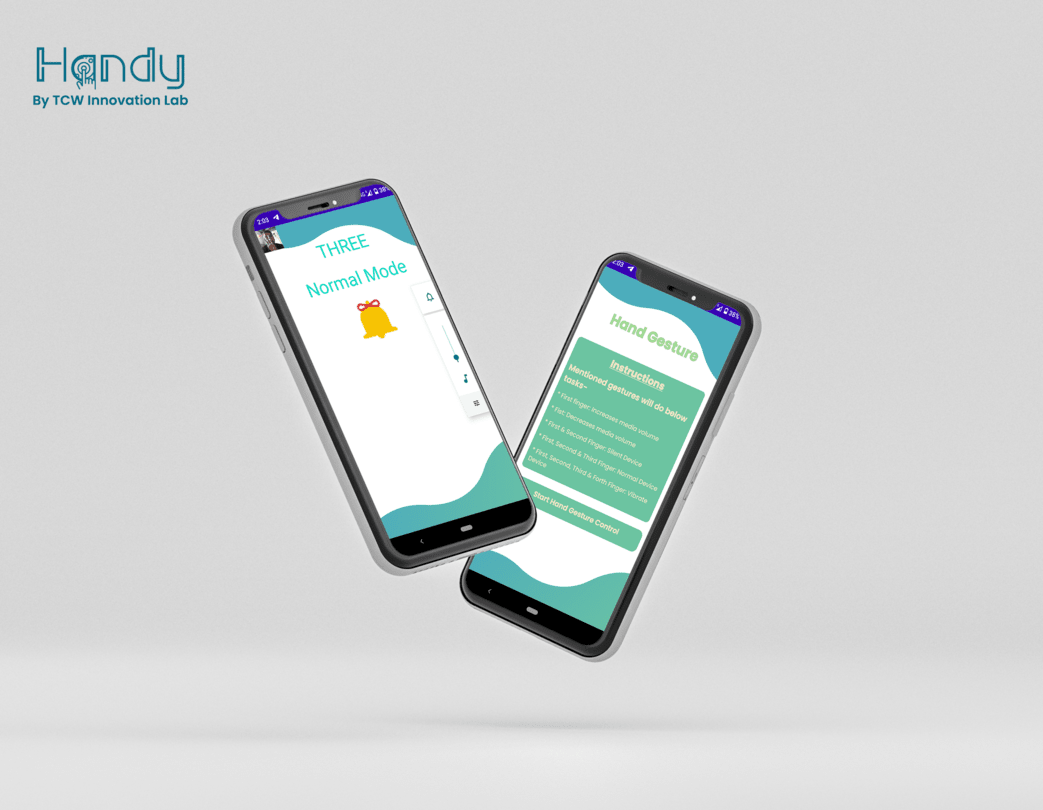

Handy is a hand-gesture detection app that came out of brainstorming by our innovation team. The goal is to recognize different gestures made with the fingers and thumb. It recognizes gestures such as ONE, TWO, THREE, FOUR, FIVE, SIX, YEAH, ROCK, FIST, and OK, and maps them to phone actions such as raising or lowering the volume. Gestures can also switch the smartphone's sound mode between Normal, Silent, and Vibrate.
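To make the gesture-to-action idea concrete, here is a minimal Java sketch of a lookup table from gesture labels to device actions. The gesture names come from the description above; the `DeviceAction` enum, the `GestureActionMap` class, and the specific bindings are hypothetical and chosen only for illustration, since the write-up does not say which gesture triggers which action.

```java
import java.util.EnumMap;
import java.util.Map;

// Gesture labels as listed in the description above.
enum Gesture { ONE, TWO, THREE, FOUR, FIVE, SIX, YEAH, ROCK, FIST, OK }

// Hypothetical device actions (names are assumptions, not Handy's real code).
enum DeviceAction { VOLUME_UP, VOLUME_DOWN, MODE_NORMAL, MODE_SILENT, MODE_VIBRATE, NONE }

final class GestureActionMap {
    private final Map<Gesture, DeviceAction> bindings = new EnumMap<>(Gesture.class);

    GestureActionMap() {
        // Illustrative bindings only; the real app may map gestures differently.
        bindings.put(Gesture.ONE, DeviceAction.VOLUME_UP);
        bindings.put(Gesture.TWO, DeviceAction.VOLUME_DOWN);
        bindings.put(Gesture.FIVE, DeviceAction.MODE_NORMAL);
        bindings.put(Gesture.FIST, DeviceAction.MODE_SILENT);
        bindings.put(Gesture.OK, DeviceAction.MODE_VIBRATE);
    }

    // Returns the bound action, or NONE when the gesture has no binding.
    DeviceAction actionFor(Gesture gesture) {
        return bindings.getOrDefault(gesture, DeviceAction.NONE);
    }
}
```

On Android, an action such as `VOLUME_UP` would typically be carried out through `AudioManager.adjustStreamVolume`, and the mode actions through `AudioManager.setRingerMode`. Keeping the mapping in a plain table like this makes it easy to rebind gestures without touching the detection code.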

We built the app from scratch on top of MediaPipe, following the MVP approach, as an innovation project.

After a few rounds of brainstorming, we decided to keep it simple and solid. Our innovation team planned this project to move objects and control our smartphone using touchless hand gestures.

We started working on Handy by,

Our main challenge was detecting finger gestures in Java, which we overcame by using MediaPipe. We plot landmarks on the hand to be detected, and early on we ran into issues detecting the finger landmarks.
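As an illustration of how hand landmarks can be turned into a finger count, the following Java sketch applies a common heuristic to MediaPipe's 21-point hand model. It is not Handy's actual classifier. It assumes an upright hand, normalized image coordinates where `y` grows downward, and landmarks passed in as a plain `float[21][2]` array of `(x, y)` pairs; the `FingerCounter` class and its method names are hypothetical.

```java
final class FingerCounter {
    // Indices in MediaPipe's 21-point hand model: fingertips and PIP joints
    // for the index, middle, ring, and pinky fingers.
    private static final int[] TIPS = {8, 12, 16, 20};
    private static final int[] PIPS = {6, 10, 14, 18};
    private static final int THUMB_TIP = 4;
    private static final int THUMB_IP = 3;
    private static final int PINKY_MCP = 17;

    // Returns five flags (thumb, index, middle, ring, pinky): true if extended.
    static boolean[] extendedFingers(float[][] landmarks) {
        if (landmarks == null || landmarks.length != 21) {
            throw new IllegalArgumentException("expected 21 (x, y) landmarks");
        }
        boolean[] extended = new boolean[5];
        // Thumb heuristic (an assumption): the tip sits farther from the
        // pinky base than the thumb's IP joint when the thumb is stretched out.
        extended[0] = distance(landmarks[THUMB_TIP], landmarks[PINKY_MCP])
                > distance(landmarks[THUMB_IP], landmarks[PINKY_MCP]);
        // Other fingers: the tip is above its PIP joint (smaller y) when raised.
        for (int i = 0; i < TIPS.length; i++) {
            extended[i + 1] = landmarks[TIPS[i]][1] < landmarks[PIPS[i]][1];
        }
        return extended;
    }

    // Counts how many of the five fingers are extended.
    static int count(float[][] landmarks) {
        int total = 0;
        for (boolean isExtended : extendedFingers(landmarks)) {
            if (isExtended) {
                total++;
            }
        }
        return total;
    }

    private static double distance(float[] a, float[] b) {
        return Math.hypot(a[0] - b[0], a[1] - b[1]);
    }
}
```

A count alone only separates gestures like ONE through FIVE and a fist. Gestures such as ROCK or OK would need the per-finger flags from `extendedFingers`, or extra checks such as the thumb-to-index distance.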

After achieving the required functionality, we tried to remove the camera preview used during detection so that the screen could be used for other views, but as soon as we removed the preview, fingers were no longer detected. As a workaround, we first reduced the size of the camera preview, which met the requirement. After some further research, we can now detect gestures without showing a camera preview at all.

Our next step is to control the screen without touching the display using finger gestures.

The development approach focused first on the core requirement: detecting the fingers.

We successfully developed the app to meet the initial requirements and are now working to scale it to the next stage. The product is well suited to live-streaming platforms, where the device is fixed in position and the user can control the app with gestures. Entrepreneurs and creators can explore many more ideas around this, and we would love to be part of their startups and contribute to their projects.

Architecture-first design with clear separation of concerns, scalable data models, and secure integration points. Built for reliability and future extension.

Delivered on time with a stable, production-ready system. The platform supports ongoing iteration and scale as business needs evolve.
